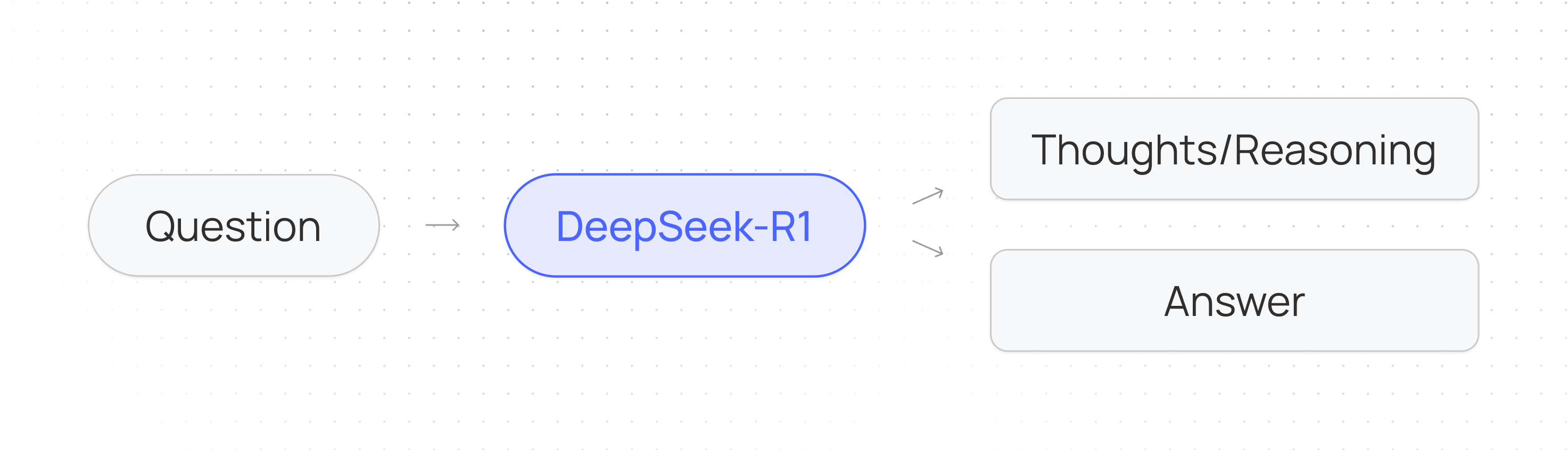

Reasoning models like DeepSeek-R1 have been trained to think step by step before responding with an answer. As a result, they excel at complex reasoning tasks such as coding, mathematics, planning, puzzles, and agent workflows. Given a question in the form of an input prompt, DeepSeek-R1 outputs both its chain of thought (its reasoning process), in the form of thinking tokens between `<think>` tags, and the answer.
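Because the reasoning and the answer arrive in one text stream, client code often needs to separate them. The following is a minimal sketch, assuming the output contains at most one `<think>...</think>` block followed by the answer; the helper name `split_reasoning` is hypothetical and not part of any SDK.

```python
import re
from typing import Tuple

# Matches the first <think>...</think> block; DOTALL lets "." span newlines.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(text: str) -> Tuple[str, str]:
    """Return (reasoning, answer) from raw model output.

    If no complete <think>...</think> block is present, the reasoning is
    empty and the whole text is treated as the answer.
    """
    match = THINK_BLOCK.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    answer = text[match.end():].strip()
    return reasoning, answer


# Illustrative input only; this is not real model output.
sample = "<think>\n2 + 2 is 4.\n</think>\nThe answer is 4."
reasoning, answer = split_reasoning(sample)
print("reasoning:", reasoning)
print("answer:", answer)
```

Keeping the reasoning separate makes it easy to log or discard it while showing only the answer to end users.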

Because these models spend more computation and tokens on reasoning, they produce longer outputs and can be slower and more expensive than their non-reasoning counterparts.

How to use DeepSeek-R1 API

Since these models produce longer responses, stream tokens as they are generated instead of waiting for the whole response to complete.

DeepSeek-R1 Use-cases

- Benchmarking other LLMs: Evaluates LLM responses with contextual understanding, particularly useful in fields requiring critical validation like law, finance and healthcare.

- Code Review: Performs comprehensive code analysis and suggests improvements across large codebases.

- Strategic Planning: Creates detailed plans and selects appropriate AI models based on specific task requirements.

- Document Analysis: Processes unstructured documents and identifies patterns and connections across multiple sources.

- Information Extraction: Efficiently extracts relevant data from large volumes of unstructured information, ideal for RAG systems.

- Ambiguity Resolution: Interprets unclear instructions effectively and seeks clarification when needed rather than making assumptions.

Managing context and costs

When working with reasoning models, it’s crucial to maintain adequate space in the context window to accommodate the model’s reasoning process. The number of reasoning tokens generated can vary based on the complexity of the task: simpler problems may only require a few hundred tokens, while more complex challenges could generate tens of thousands of reasoning tokens.

Cost and latency management is an important consideration when using these models. To maintain control over resource usage, you can limit total token generation using the `max_tokens` parameter.

While limiting tokens can reduce costs/latency, it may also impact the model’s ability to fully reason through complex problems. Therefore, it’s recommended to adjust these parameters based on your specific use case and requirements, finding the optimal balance between thorough reasoning and resource utilization.
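A token cap and streaming can be combined in a single request. The sketch below assumes an OpenAI-style chat completions client (for example, the Together Python SDK's `client.chat.completions.create`), the model identifier `deepseek-ai/DeepSeek-R1`, and chunks shaped as `chunk.choices[0].delta.content`; these are assumptions to check against the current API reference. The helper names and the stand-in client are hypothetical and exist only so the example runs offline.

```python
from types import SimpleNamespace
from typing import Any, Dict, Iterator


def build_request(prompt: str, max_tokens: int) -> Dict[str, Any]:
    """Keyword arguments for a streamed chat completion with a token cap."""
    return {
        "model": "deepseek-ai/DeepSeek-R1",  # assumed model identifier
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,  # limits total generated tokens
        "stream": True,  # receive tokens as they are generated
    }


def stream_text(client: Any, request: Dict[str, Any]) -> Iterator[str]:
    """Yield text fragments as they arrive from an OpenAI-style client."""
    for chunk in client.chat.completions.create(**request):
        if not chunk.choices:
            continue
        piece = chunk.choices[0].delta.content
        if piece:  # some chunks carry no text
            yield piece


# Stand-in client (hypothetical) that mimics the assumed chunk shape.
def _chunk(text):
    delta = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


class _FakeCompletions:
    def create(self, **request):
        parts = ["<think>", None, "ok</think>", "Done"]
        return iter([_chunk(p) for p in parts])


fake_client = SimpleNamespace(chat=SimpleNamespace(completions=_FakeCompletions()))
request = build_request("What is 17 * 23?", max_tokens=4096)
demo_output = "".join(stream_text(fake_client, request))
print(demo_output)
```

With a real client, each fragment could be printed as it is yielded. The value 4096 is only a placeholder; a cap that is too low can cut the response off before the model finishes reasoning, so it is worth tuning per task.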

General limitations

Currently, the capabilities of DeepSeek-R1 fall short of DeepSeek-V3 in general-purpose tasks such as:

- Function calling

- Multi-turn conversation

- Complex role-playing

- JSON output

Prompting DeepSeek-R1

Reasoning models like DeepSeek-R1 should be used differently than standard non-reasoning models to get optimal results. Think of DeepSeek-R1 as a senior problem-solver. Provide high-level objectives (e.g., “Analyze this data and identify trends”) and let it determine the methodology.

- Strengths: Excels at open-ended reasoning, multi-step logic, and inferring unstated requirements.

- Over-prompting (e.g., micromanaging steps) can limit its ability to leverage advanced reasoning.

- Under-prompting (e.g., vague goals like “Help with math”) may reduce specificity. Balance clarity with flexibility.

- Clear and specific prompts: Write your instructions in plain language, clearly stating what you want. Complex, lengthy prompts often lead to less effective results.

- Sampling parameters: Set the `temperature` within the range of 0.5-0.7 (0.6 is recommended) to prevent endless repetitions or incoherent outputs. Also, a `top-p` of 0.95 is recommended.

- No system prompt: Avoid adding a system prompt; all instructions should be contained within the user prompt.

- No few-shot prompting: Do not provide examples in the prompt, as this consistently degrades model performance. Rather, describe in detail the problem, task, and output format you want the model to accomplish. If you do want to provide examples, ensure that they align very closely with your prompt instructions.

- Structure your prompt: Break up different parts of your prompt using clear markers like XML tags, markdown formatting, or labeled sections. This organization helps ensure the model correctly interprets and addresses each component of your request.

- Set clear requirements: When your request has specific limitations or criteria, state them explicitly (like “Each line should take no more than 5 seconds to say…”). Whether it’s budget constraints, time limits, or particular formats, clearly outline these parameters to guide the model’s response.

- Clearly describe output: Paint a clear picture of your desired outcome. Describe the specific characteristics or qualities that would make the response exactly what you need, allowing the model to work toward meeting those criteria.

- Majority voting for responses: When evaluating model performance, it is recommended to generate multiple solutions and then use the most frequent results.

- No chain-of-thought prompting: Since these models always reason prior to answering the question, it is not necessary to tell them to “Reason step by step…”

- Math tasks: For mathematical problems, it is advisable to include a directive in your prompt such as: “Please reason step by step, and put your final answer within `\boxed{}`.”

- Forcing `<think>`: On rare occasions, DeepSeek-R1 bypasses the thinking pattern, which can adversely affect the model’s performance. In this case, the response will not start with a `<think>` tag. If you see this problem, try telling the model to start with the `<think>` tag.
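Several of the tips above can be combined in code: a single user message with no system prompt, the recommended sampling values, the math directive, and majority voting over sampled answers. The following is a rough sketch under stated assumptions: the API parameter for top-p is assumed to be named `top_p`, the `<problem>` tag is just one possible section marker, and all helper names are hypothetical. The answer extractor handles only `\boxed{}` contents without nested braces.

```python
import re
from collections import Counter
from typing import Any, Dict, List, Optional

# Sampling values recommended in the list above ("top_p" is an assumed name).
SAMPLING = {"temperature": 0.6, "top_p": 0.95}

MATH_DIRECTIVE = (
    "Please reason step by step, and put your final answer within \\boxed{}."
)


def build_math_request(problem: str) -> Dict[str, Any]:
    """One user message (no system prompt) with a labeled problem section."""
    prompt = f"<problem>\n{problem}\n</problem>\n\n{MATH_DIRECTIVE}"
    return {"messages": [{"role": "user", "content": prompt}], **SAMPLING}


def boxed_answer(response: str) -> Optional[str]:
    """Return the contents of the last brace-free \\boxed{...}, if any."""
    found = re.findall(r"\\boxed\{([^{}]*)\}", response)
    return found[-1].strip() if found else None


def majority_vote(responses: List[str]) -> Optional[str]:
    """Most frequent boxed answer across several sampled responses."""
    answers = [a for a in map(boxed_answer, responses) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


# Illustrative responses only; these are not real model outputs.
samples = [
    "<think>17 * 23 = 391</think>\nThe result is \\boxed{391}.",
    "<think>340 + 51 = 391</think>\n\\boxed{391}",
    "<think>slip in arithmetic</think>\n\\boxed{381}",
]
request = build_math_request("What is 17 * 23?")
winner = majority_vote(samples)
print(winner)
```

In practice the same request would be sent several times and the collected responses passed to `majority_vote`. Responses without a boxed answer are skipped rather than counted.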