This quickstart walks through a full fine-tuning run on Together AI: preparing a conversational dataset (CoQA), launching a LoRA job on Qwen3 8B, monitoring progress, running inference on the result, and comparing it to the base model. For background on what fine-tuning is and when to use it, see the overview.

A runnable notebook version lives on GitHub: fine-tuning guide notebook.

Prerequisites

- Create an account. Sign up at Together AI and generate an API key.

- Set your API key.

export TOGETHER_API_KEY=<your-key>

- Install the libraries.

pip install -U together datasets transformers tqdm

Choose a base model

The first step is picking a model to fine-tune:

- Base models are trained on a wide variety of text and make broad predictions.

- Instruct models are trained on instruction-response pairs and tend to do better on specific tasks.

For a first run, start with an instruction-tuned model:

- Qwen/Qwen3-8B for simpler tasks.

- Qwen/Qwen3-32B for more complex datasets and domains.

As a rough guide: start with an 8B model for datasets under roughly 1,000 examples or simpler tasks; use a 32B model for larger datasets or tasks requiring complex reasoning.

Base models with the -Reference suffix (for example, meta-llama/Meta-Llama-3.1-8B-Instruct-Reference) can be fine-tuned but cannot be deployed as dedicated endpoints. To verify deployability before training, run client.endpoints.list_hardware(model="<base-model>"). A 404 means the base can’t host a fine-tune.

Step 1: Prepare your dataset

Fine-tuning requires data formatted in a specific way. This walkthrough uses a conversational dataset so the fine-tuned model can improve on multi-turn conversations.

Together AI supports several data formats:

- Conversational data. A JSON object per line, where each object contains a list of conversation turns under the messages key. Each message must have a role (system, user, or assistant) and content. See conversational data.

{
  "messages": [
    { "role": "system", "content": "You are a helpful assistant." },
    { "role": "user", "content": "Hello!" },
    { "role": "assistant", "content": "Hi! How can I help you?" }
  ]
}

- Instruction data. For instruction-based tasks with prompt-completion pairs. See instruction data.

- Preference data. For preference-based fine-tuning. See preference data.

- Generic text data. For plain text completion tasks. See generic text data.

Together AI supports two file formats:

- JSONL. Simpler and works for most cases.

- Parquet. Stores pre-tokenized data and gives you the flexibility to specify custom attention masks and labels (loss masking).

Default to JSONL unless you need custom tokenization or specific loss masking, in which case use Parquet.
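The conversational format can be sanity-checked locally, line by line, before anything is uploaded. The following is a minimal sketch, not Together AI's own validator: the function name validate_chat_line is hypothetical, and it checks only the rules stated in this section (a messages list whose entries carry a role of system, user, or assistant and a string content).

```python
import json

ALLOWED_ROLES = {"system", "user", "assistant"}


def validate_chat_line(line: str) -> list[str]:
    """Return a list of problems found in one JSONL line (empty if none)."""
    try:
        row = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]

    if not isinstance(row, dict):
        return ["line is not a JSON object"]
    messages = row.get("messages")
    if not isinstance(messages, list) or not messages:
        return ["'messages' must be a non-empty list"]

    problems = []
    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            problems.append(f"message {i} is not an object")
            continue
        role = msg.get("role")
        if not isinstance(role, str) or role not in ALLOWED_ROLES:
            problems.append(f"message {i} has an unsupported role")
        if not isinstance(msg.get("content"), str):
            problems.append(f"message {i} has no string content")
    return problems


good = '{"messages": [{"role": "user", "content": "Hello!"}]}'
bad = '{"messages": [{"role": "tool", "content": "Hello!"}]}'
print(validate_chat_line(good))
print(validate_chat_line(bad))
```

A check like this complements, rather than replaces, the SDK's own file check described later in this guide.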

Example: preparing the CoQA dataset

Here’s an example of transforming the CoQA dataset into the required chat format:

from datasets import load_dataset

# Load the dataset
coqa_dataset = load_dataset("stanfordnlp/coqa")

# The system prompt, if present, must always be at the beginning
system_prompt = (
    "Read the story and extract answers for the questions.\nStory: {}"
)


def map_fields(row):
    # Create system prompt
    messages = [
        {"role": "system", "content": system_prompt.format(row["story"])}
    ]
    # Add user and assistant messages
    for q, a in zip(row["questions"], row["answers"]["input_text"]):
        messages.append({"role": "user", "content": q})
        messages.append({"role": "assistant", "content": a})
    return {"messages": messages}


# Transform the data using the mapping function
train_messages = coqa_dataset["train"].map(
    map_fields,
    remove_columns=coqa_dataset["train"].column_names,
)

# Save the data to a JSONL file
train_messages.to_json("coqa_prepared_train.jsonl")
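To see what the mapping produces without downloading CoQA, here is a small sketch that applies the same logic to a hand-written row. The row contents are invented for illustration and the function name to_chat_row is hypothetical; only the field names (story, questions, answers.input_text) come from the CoQA code in this section.

```python
import json

SYSTEM_PROMPT = "Read the story and extract answers for the questions.\nStory: {}"


def to_chat_row(row: dict) -> dict:
    """Turn one CoQA-style row into the conversational format."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT.format(row["story"])}]
    for question, answer in zip(row["questions"], row["answers"]["input_text"]):
        messages.append({"role": "user", "content": question})
        messages.append({"role": "assistant", "content": answer})
    return {"messages": messages}


# Hypothetical row, written by hand for this example
toy_row = {
    "story": "Mia has a red kite.",
    "questions": ["Who has a kite?", "What color is it?"],
    "answers": {"input_text": ["Mia", "red"]},
}

chat_row = to_chat_row(toy_row)
print(json.dumps(chat_row))  # one line of the resulting JSONL file
```

Each story becomes a single training example: one system message followed by alternating user and assistant turns.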

Loss masking options depend on the data format:

- With conversational or instruction data, set train_on_inputs (bool or auto) to mask user messages in conversational data or prompts in instruction data.

- With conversational data, assign weights to specific messages to mask them.

- With pre-tokenized data (Parquet), set the label for specific tokens to -100 to mask them.
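As an illustration of the pre-tokenized case, the sketch below builds a labels list in which the prompt tokens are set to -100 so that they are excluded from the loss. The token IDs are invented and the helper name mask_prompt_labels is hypothetical; a real pipeline would take the IDs from the base model's tokenizer.

```python
IGNORE_INDEX = -100  # label value that marks a token as masked


def mask_prompt_labels(input_ids: list[int], prompt_length: int) -> list[int]:
    """Copy input_ids into labels, masking the first prompt_length tokens."""
    if not 0 <= prompt_length <= len(input_ids):
        raise ValueError("prompt_length must be between 0 and len(input_ids)")
    return [IGNORE_INDEX] * prompt_length + input_ids[prompt_length:]


# Made-up token IDs: three prompt tokens followed by two completion tokens
input_ids = [101, 7592, 2088, 3407, 102]
labels = mask_prompt_labels(input_ids, prompt_length=3)
print(labels)  # [-100, -100, -100, 3407, 102]
```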

Check and upload your data

Once your data is prepared, verify it’s correctly formatted and upload it to Together AI. check_file validates the JSONL schema client-side before uploading, catching formatting errors without consuming API quota.

import json
import os

from together import Together
from together.utils import check_file

TOGETHER_API_KEY = os.getenv("TOGETHER_API_KEY")
# Optional, for logging fine-tuning to wandb
WANDB_API_KEY = os.getenv("WANDB_API_KEY")

client = Together(api_key=TOGETHER_API_KEY)

# Check the file format
sft_report = check_file("coqa_prepared_train.jsonl")
print(json.dumps(sft_report, indent=2))
assert sft_report["is_check_passed"]

# Upload the data to Together
train_file_resp = client.files.upload(
    "coqa_prepared_train.jsonl", purpose="fine-tune", check=True
)
print(train_file_resp.id)  # Save this ID for starting your fine-tuning job

{

"is_check_passed": true,

"message": "Checks passed",

"found": true,

"file_size": 23777505,

"utf8": true,

"line_type": true,

"text_field": true,

"key_value": true,

"has_min_samples": true,

"num_samples": 7199,

"load_json": true,

"filetype": "jsonl"

}

Step 2: Start the fine-tuning job

With the data uploaded, launch the fine-tuning job with client.fine_tuning.create().

Key parameters

| Parameter | Required | Type | Default | Notes |
|---|---|---|---|---|
| training_file | Required | string | — | File ID returned by the upload step. |
| model | Required | string | — | Base model to fine-tune. |
| lora | Optional | bool | true | Set to false for full fine-tuning. |
| n_epochs | Optional | int | 1 | Number of passes through the training dataset. |
| learning_rate | Optional | float | 0.00001 | Step size for weight updates. |
| batch_size | Optional | int or "max" | "max" | Examples per training step. |
| warmup_ratio | Optional | float | 0.0 | Fraction of steps for learning-rate warmup. |
| weight_decay | Optional | float | 0.0 | L2 regularization strength. |
| max_grad_norm | Optional | float | 1.0 | Gradient clipping threshold. Set to 0 to disable. |
| train_on_inputs | Optional | bool or "auto" | "auto" | Whether to mask user/prompt tokens from the loss. "auto" is recommended. |
| suffix | Optional | string | — | Up to 64 characters appended to the output model name. |
| n_checkpoints | Optional | int | 1 | Number of intermediate checkpoints saved during training. |
| n_evals | Optional | int | 0 | Number of evaluations against validation_file during training. |
| hf_api_token | Optional | string | — | Only required when from_hf_model points to a private HuggingFace repository. Omit for public models. |

hf_api_token is only needed when your base model is a private HuggingFace repository. Omit it entirely when fine-tuning Together AI-hosted models or public HuggingFace models; passing a dummy value can cause a 400 error.
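One way to avoid passing placeholder values is to assemble the keyword arguments first and drop anything that is unset. This is a sketch under stated assumptions: the helper name build_job_kwargs is hypothetical and the file ID is a placeholder, while the parameter names are the ones listed under Key parameters.

```python
def build_job_kwargs(training_file: str, model: str, **optional) -> dict:
    """Collect fine_tuning.create() arguments, skipping unset (None) values."""
    kwargs = {"training_file": training_file, "model": model}
    kwargs.update({k: v for k, v in optional.items() if v is not None})
    return kwargs


job_kwargs = build_job_kwargs(
    training_file="your-training-file-id",  # placeholder
    model="Qwen/Qwen3-8B",
    n_epochs=3,
    hf_api_token=None,  # public or Together AI-hosted model: left out entirely
)
print(job_kwargs)
# The result could then be passed as client.fine_tuning.create(**job_kwargs)
```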

LoRA fine-tuning (recommended)

ft_resp = client.fine_tuning.create(

training_file=train_file_resp.id,

model="Qwen/Qwen3-8B",

train_on_inputs="auto",

n_epochs=3,

n_checkpoints=1,

wandb_api_key=WANDB_API_KEY, # Optional, for visualization

lora=True, # Default True

warmup_ratio=0,

learning_rate=1e-5,

suffix="qwen3_8b_demo",

)

print(ft_resp.id) # Save this job ID for monitoring

Full fine-tuning

For full fine-tuning, set lora to False:

## Using Python

ft_resp = client.fine_tuning.create(

training_file=train_file_resp.id,

model="Qwen/Qwen3-8B",

train_on_inputs="auto",

n_epochs=3,

n_checkpoints=1,

warmup_ratio=0,

lora=False, # Must be specified as False, defaults to True

learning_rate=1e-5,

suffix="qwen3_8b_full_finetune",

)

ft-d1522ffb-8f3e #fine-tuning job id

Each call to fine_tuning.create() starts a new independent job and incurs cost once training begins. If you get an error and need to retry, call client.fine_tuning.list() first to check whether a job already exists before creating another.
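A retry guard along these lines could look like the sketch below. It assumes that each job object returned by client.fine_tuning.list() exposes id, status, and training_file attributes, and that failed jobs use the status names shown; both are assumptions to confirm against the SDK version in use. The helper name find_existing_job is hypothetical, and the demo runs on stand-in objects rather than a live API call.

```python
from types import SimpleNamespace

# Assumed status names for jobs that ended without producing a model
FAILED_STATUSES = {"error", "cancelled"}


def find_existing_job(jobs, training_file: str):
    """Return the first job on this training file that has not failed, if any."""
    for job in jobs:
        same_file = getattr(job, "training_file", None) == training_file
        status = str(getattr(job, "status", ""))
        if same_file and status not in FAILED_STATUSES:
            return job
    return None


# Stand-in objects; in practice these would come from client.fine_tuning.list()
jobs = [
    SimpleNamespace(id="ft-aaaa", status="error", training_file="file-123"),
    SimpleNamespace(id="ft-bbbb", status="running", training_file="file-123"),
]
existing = find_existing_job(jobs, "file-123")
print(existing.id if existing else "no existing job, safe to create one")
```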

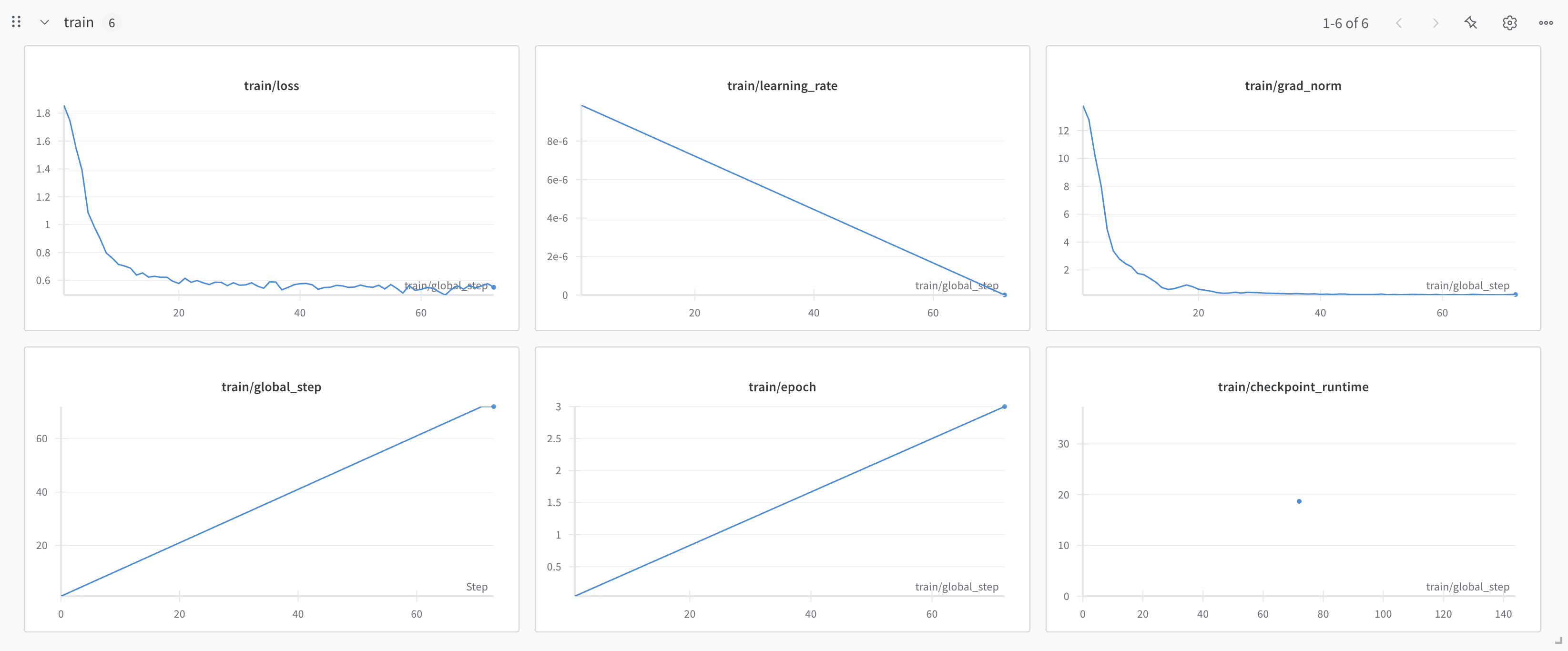

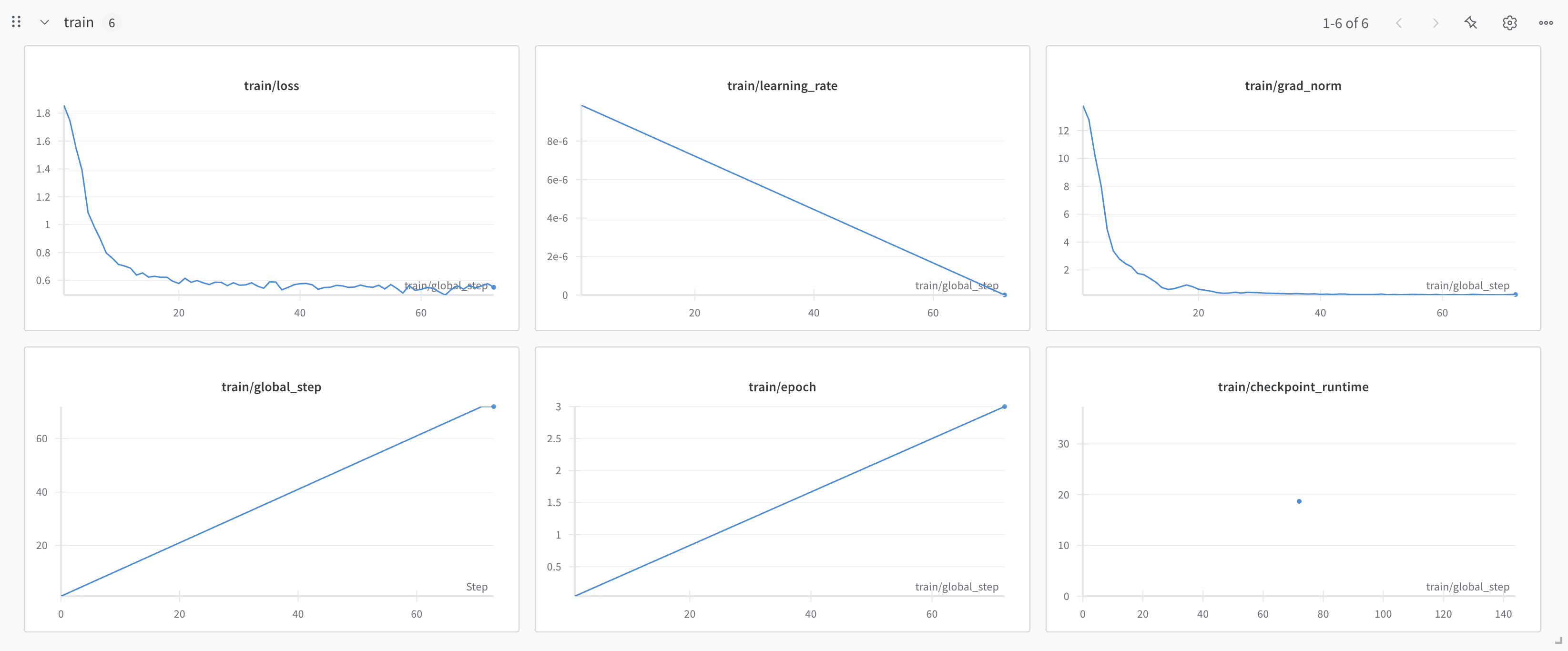

Step 3: Monitor the job

Fine-tuning takes time, depending on the model size, dataset size, and hyperparameters. Your job progresses through several states: pending → queued → running → uploading → completed.

Queue wait time varies by platform load and is typically under an hour. Once a job is running, you can estimate remaining training time by multiplying the first epoch’s duration by n_epochs; the epoch duration appears in the event log. Save your job ID: you can resume monitoring at any time without re-uploading data.
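The estimate is simple arithmetic. Here is a sketch with made-up timings (the 160-second epoch is illustrative, not a measured value, and the function name is hypothetical). It covers training only; the uploading state adds time on top.

```python
def estimate_remaining_seconds(
    first_epoch_seconds: float, n_epochs: int, epochs_done: int = 1
) -> float:
    """Estimate remaining training time, assuming every epoch takes as long as the first."""
    total = first_epoch_seconds * n_epochs
    return max(total - first_epoch_seconds * epochs_done, 0.0)


# Hypothetical numbers: the first epoch took 160 s and the job uses n_epochs=3
remaining = estimate_remaining_seconds(160, n_epochs=3, epochs_done=1)
print(f"about {remaining / 60:.1f} minutes of training left")
```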

- List all jobs:

client.fine_tuning.list()

- Status of a job:

client.fine_tuning.retrieve(id=ft_resp.id)

- List all events for a job:

client.fine_tuning.list_events(id=ft_resp.id) - retrieves logs and events generated during the job

- Training metrics:

client.fine_tuning.list_metrics(id=ft_resp.id) — retrieves per-step loss, learning rate, and other training metrics. See Training metrics.

- Cancel job:

client.fine_tuning.cancel(id=ft_resp.id)

- Download fine-tuned model:

client.fine_tuning.download(id=ft_resp.id) (v1) or client.fine_tuning.with_streaming_response.content(ft_id=ft_resp.id) (v2)

Once the job is complete (status == 'completed'), the response from retrieve contains the name of your newly created fine-tuned model in the x_model_output_name field. It follows the pattern <your-account>/<base-model-name>:<suffix>:<job-id>. This field is empty while the job is pending, queued, or running.
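Waiting for completion can be wrapped in a small polling loop. The sketch below takes the retrieve call as a parameter so it can run offline with stand-in responses. The function name wait_for_job is hypothetical, and the failure statuses it checks ("error", "cancelled") are assumptions to verify against the API reference.

```python
import time
from types import SimpleNamespace


def wait_for_job(retrieve, job_id: str, poll_seconds: float = 60, sleep=time.sleep):
    """Poll retrieve(job_id) until the job completes; raise if it fails."""
    while True:
        job = retrieve(job_id)
        status = str(job.status)
        if status == "completed":
            return job.x_model_output_name
        if status in ("error", "cancelled"):  # assumed failure statuses
            raise RuntimeError(f"Job {job_id} ended with status {status}")
        sleep(poll_seconds)


# Stand-ins for client.fine_tuning.retrieve responses so the sketch runs offline
_responses = iter([
    SimpleNamespace(status="queued", x_model_output_name=None),
    SimpleNamespace(status="running", x_model_output_name=None),
    SimpleNamespace(status="completed", x_model_output_name="acct/model-demo"),
])
model_name = wait_for_job(
    lambda _id: next(_responses), "ft-xxxx-yyyy", sleep=lambda _s: None
)
print(model_name)
```

With a real client, the first argument would be something like `lambda job_id: client.fine_tuning.retrieve(id=job_id)`.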

Check status via the API

# Check status of the job
resp = client.fine_tuning.retrieve(ft_resp.id)
print(resp.status)

# Print the job's logs so far
for event in client.fine_tuning.list_events(id=ft_resp.id).data:
    print(event.message)

Fine tune request created

Job started at Thu Apr 3 03:19:46 UTC 2025

Model data downloaded for togethercomputer/Meta-Llama-3.1-8B-Instruct-Reference__TOG__FT at Thu Apr 3 03:19:48 UTC 2025

Data downloaded for togethercomputer/Meta-Llama-3.1-8B-Instruct-Reference__TOG__FT at 2025-04-03T03:19:55.595750

WandB run initialized.

Training started for model togethercomputer/Meta-Llama-3.1-8B-Instruct-Reference__TOG__FT

Epoch completed, at step 24

Epoch completed, at step 48

Epoch completed, at step 72

Training completed for togethercomputer/Meta-Llama-3.1-8B-Instruct-Reference__TOG__FT at Thu Apr 3 03:27:55 UTC 2025

Uploading output model

Compressing output model

Model compression complete

Model upload complete

Job finished at Thu Apr 3 03:31:33 UTC 2025
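Event messages like these can be mined for progress information. As a sketch, the snippet below pulls the step number out of each "Epoch completed" message; the message wording is taken from the sample log, and treating it as a stable format is an assumption. The function name epoch_steps is hypothetical.

```python
import re

EPOCH_PATTERN = re.compile(r"Epoch completed, at step (\d+)")


def epoch_steps(messages):
    """Extract the step number from every 'Epoch completed' event message."""
    steps = []
    for message in messages:
        match = EPOCH_PATTERN.search(message)
        if match:
            steps.append(int(match.group(1)))
    return steps


log = [
    "Training started for model togethercomputer/Meta-Llama-3.1-8B-Instruct-Reference__TOG__FT",
    "Epoch completed, at step 24",
    "Epoch completed, at step 48",
    "Epoch completed, at step 72",
    "Uploading output model",
]
steps = epoch_steps(log)
print(steps)  # [24, 48, 72]
print(steps[1] - steps[0])  # 24 steps per epoch
```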

Resume monitoring by job ID

If your session was interrupted, resume monitoring without re-uploading data:

job_id = "ft-xxxx-yyyy" # saved from your previous session

status = client.fine_tuning.retrieve(id=job_id)

print(status.status)

print(status.x_model_output_name) # non-empty once status == "completed"

## Run delete

resp = client.fine_tuning.delete(ft_resp.id)

print(resp)

Step 4: Use the fine-tuned model

Once the job completes, your model is available for inference.

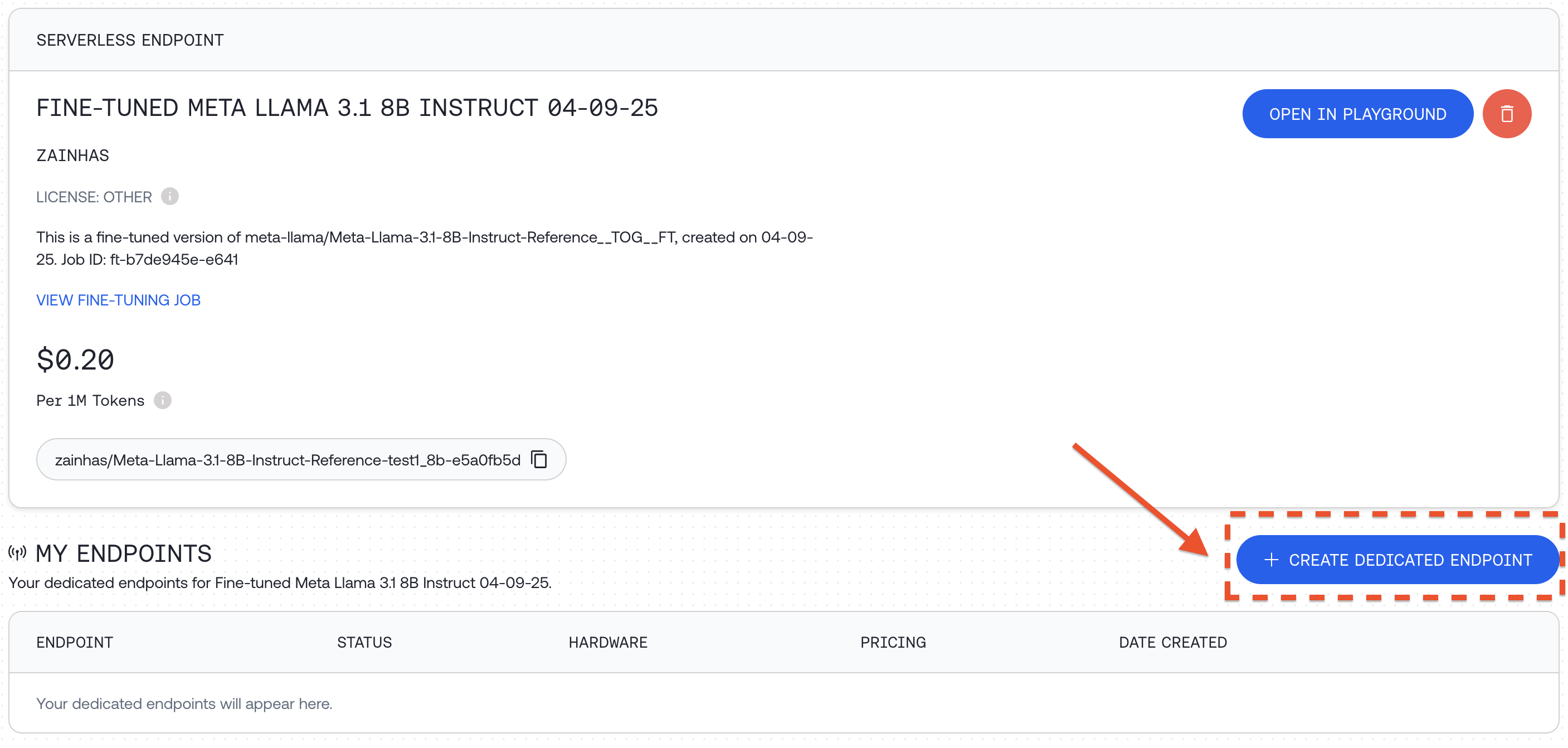

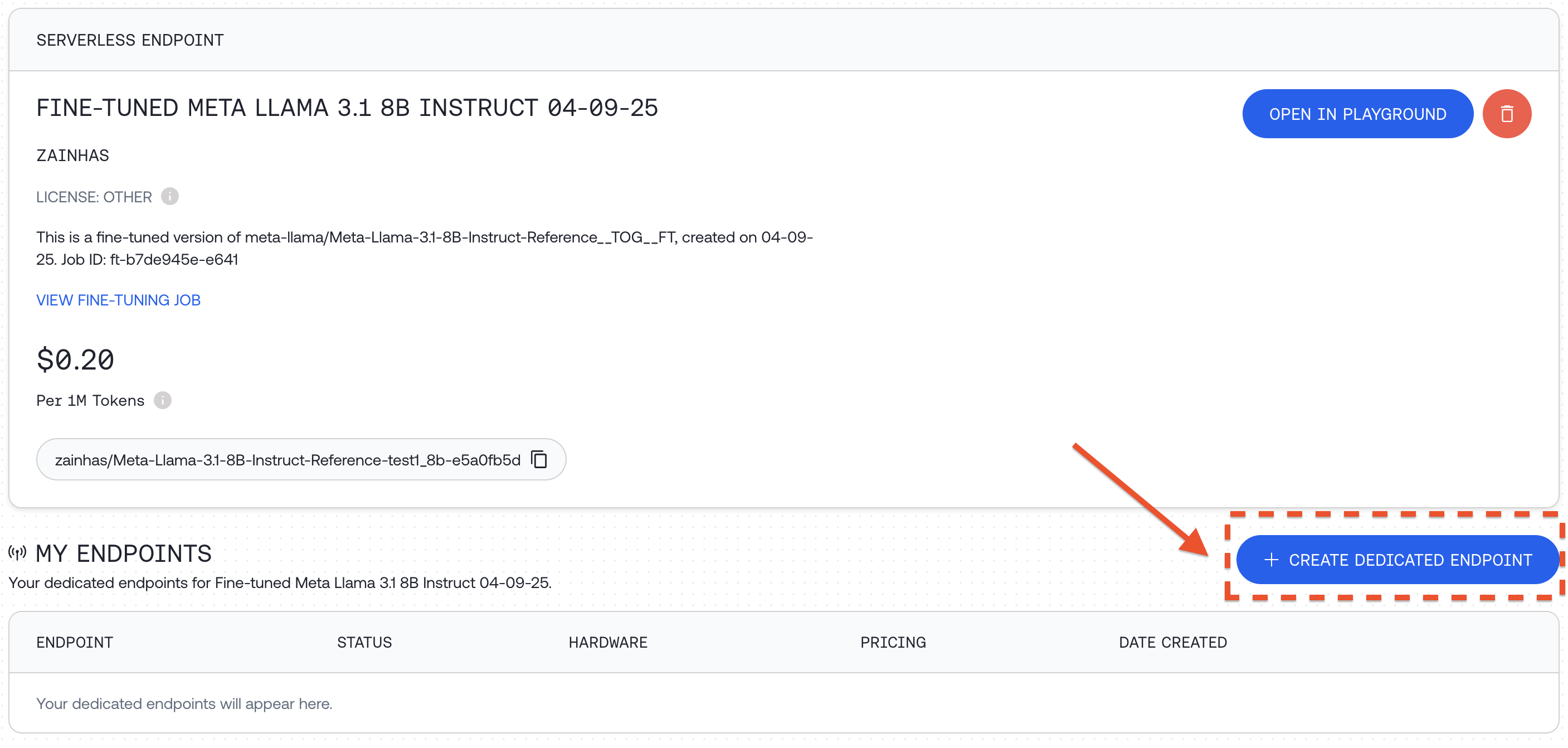

Deploy a dedicated endpoint

To run your fine-tuned model, deploy it on a dedicated endpoint:

-

Visit your models dashboard.

-

Select + Create dedicated endpoint for your fine-tuned model.

-

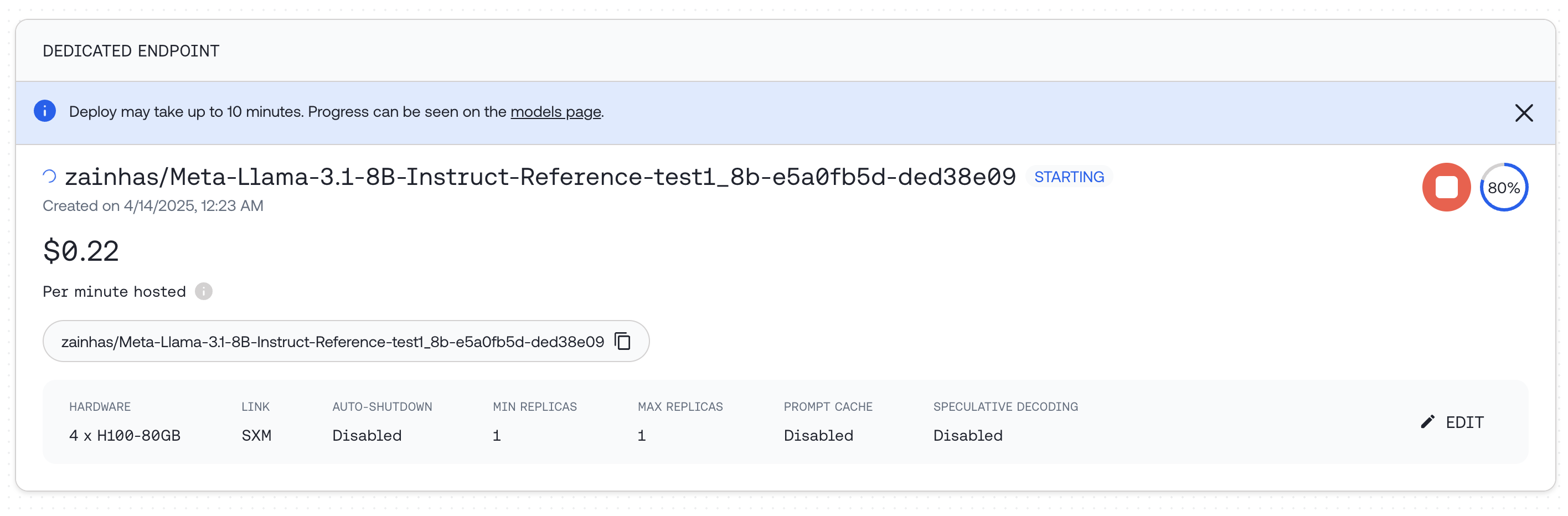

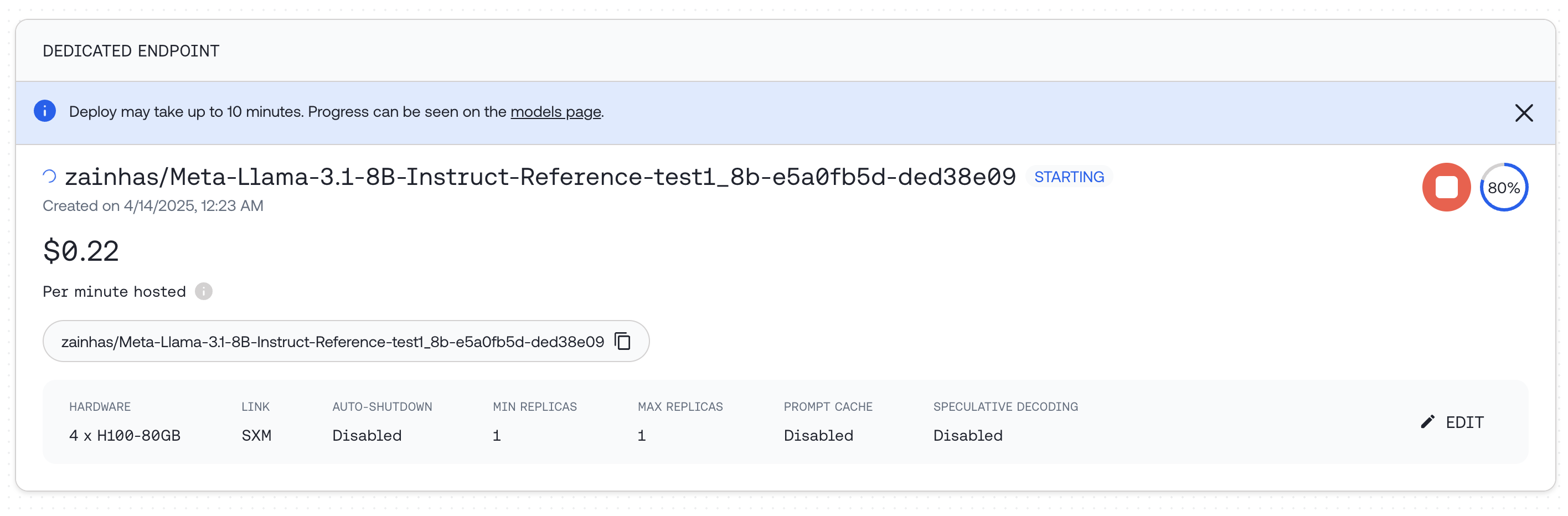

Select hardware configuration and scaling options, including min and max replicas (which set the maximum QPS the deployment can support), and select Deploy.

Select hardware configuration and scaling options, including min and max replicas (which set the maximum QPS the deployment can support), and select Deploy.

You can also deploy programmatically:

Dedicated endpoints bill per minute even when idle. Delete the endpoint when you’re done to stop charges. For the full API, see the endpoints reference.

import time

# 1. Retrieve the output model name from the completed job
status = client.fine_tuning.retrieve(id=ft_resp.id)
output_model = status.x_model_output_name  # set once status == "completed"

# 2. Preflight: confirm the base model can host a fine-tune.
# A 404 here means the base (often a `-Reference` model) can't be deployed
# as a dedicated endpoint. Pick a different base before retrying.
client.endpoints.list_hardware(model=status.model)

# 3. Create a dedicated endpoint
endpoint = client.endpoints.create(
    display_name="My fine-tuned model",
    model=output_model,
    hardware="4x_nvidia_h100_80gb_sxm",
    autoscaling={"min_replicas": 1, "max_replicas": 1},
)
print(f"Endpoint created: {endpoint.id}")

# 4. Poll until the endpoint is ready (state == "STARTED")
while True:
    ep = client.endpoints.retrieve(endpoint.id)
    print(f"  Endpoint state: {ep.state}")
    if ep.state == "STARTED":
        break
    if ep.state in ("FAILED", "STOPPED"):
        raise RuntimeError(f"Endpoint ended with state: {ep.state}")
    time.sleep(30)

# 5. Query the model
response = client.chat.completions.create(
    model=endpoint.name,
    messages=[{"role": "user", "content": "What is the capital of France?"}],
    max_tokens=128,
)
print(response.choices[0].message.content)

# 6. Delete the endpoint when done to stop per-minute billing
client.endpoints.delete(endpoint.id)
print("Endpoint deleted")

If endpoint creation fails immediately with a generic “There was an issue starting your endpoint” message, the cause is almost always an incompatible base model rather than a transient hardware issue. Verify with the list_hardware preflight above and, if it returns a 404, switch to a base model without the -Reference suffix.

For full endpoint management options, see Deploying a fine-tuned model.

Step 5: Evaluate the fine-tuned model

To measure the impact of fine-tuning, compare responses from the fine-tuned model and the base model on the same prompts in a held-out test set.

The base model may not be callable as-is. Many fine-tuneable base models, including Qwen/Qwen3-8B used in this walkthrough, are not available on the serverless API. Calling them directly with chat.completions.create(model="Qwen/Qwen3-8B", ...) returns Unable to access non-serverless model. To run the comparison, deploy the base on its own dedicated endpoint, evaluate against endpoint.name, and delete the endpoint when finished. Serverless bases (those with a non-zero per-token price in the models dashboard) can be called directly with no extra endpoint.

Use a validation set during training

Pass a validation set when starting your fine-tuning job:

response = client.fine_tuning.create(

training_file="your-training-file-id",

validation_file="your-validation-file-id",

n_evals=10, # Number of times to evaluate on validation set

model="Qwen/Qwen3-8B",

)

Post-training evaluation example

Here’s a comprehensive example of evaluating models after fine-tuning, using the CoQA dataset:

- First, load a portion of the validation dataset:

coqa_dataset_validation = load_dataset(

"stanfordnlp/coqa",

split="validation[:50]",

)

- Define a function to generate answers from both models:

from multiprocessing.pool import ThreadPool

from tqdm.auto import tqdm

base_model = "Qwen/Qwen3-8B"  # Original model
status = client.fine_tuning.retrieve(ft_resp.id)
finetuned_model = status.x_model_output_name  # set once status == "completed"


def get_model_answers(model_name):
    """
    Generate model answers for a given model name using a dataset of questions and answers.

    Args:
        model_name (str): The name of the model to use for generating answers.

    Returns:
        list: A list of lists, where each inner list contains the answers generated by the model.
    """
    model_answers = []
    system_prompt = (
        "Read the story and extract answers for the questions.\nStory: {}"
    )

    def get_answers(data):
        answers = []
        messages = [
            {
                "role": "system",
                "content": system_prompt.format(data["story"]),
            }
        ]
        for q, true_answer in zip(
            data["questions"],
            data["answers"]["input_text"],
        ):
            try:
                messages.append({"role": "user", "content": q})
                response = client.chat.completions.create(
                    messages=messages,
                    model=model_name,
                    max_tokens=64,
                )
                answer = response.choices[0].message.content
                answers.append(answer)
            except Exception:
                answers.append("Invalid Response")
            # Keep the history well-formed: follow each question with the
            # reference answer so the next question has an assistant turn before it
            messages.append({"role": "assistant", "content": true_answer})
        return answers

    # We'll use 8 threads to generate answers faster in parallel
    with ThreadPool(8) as pool:
        for answers in tqdm(
            pool.imap(get_answers, coqa_dataset_validation),
            total=len(coqa_dataset_validation),
        ):
            model_answers.append(answers)

    return model_answers

- Generate answers from both models:

base_answers = get_model_answers(base_model)

finetuned_answers = get_model_answers(finetuned_model)

- Define a function to calculate evaluation metrics:

import transformers.data.metrics.squad_metrics as squad_metrics


def get_metrics(pred_answers):
    """
    Calculate the Exact Match (EM) and F1 metrics for predicted answers.

    Args:
        pred_answers (list): A list of predicted answers.

    Returns:
        tuple: A tuple containing EM score and F1 score.
    """
    em_metrics = []
    f1_metrics = []

    for pred, data in tqdm(
        zip(pred_answers, coqa_dataset_validation),
        total=len(pred_answers),
    ):
        for pred_answer, true_answer in zip(
            pred, data["answers"]["input_text"]
        ):
            em_metrics.append(
                squad_metrics.compute_exact(true_answer, pred_answer)
            )
            f1_metrics.append(
                squad_metrics.compute_f1(true_answer, pred_answer)
            )

    return sum(em_metrics) / len(em_metrics), sum(f1_metrics) / len(f1_metrics)

- Calculate and compare metrics:

## Calculate metrics for both models

em_base, f1_base = get_metrics(base_answers)

em_ft, f1_ft = get_metrics(finetuned_answers)

print(f"Base Model - EM: {em_base:.2f}, F1: {f1_base:.2f}")

print(f"Fine-tuned Model - EM: {em_ft:.2f}, F1: {f1_ft:.2f}")

| Qwen3 8B | EM | F1 |
|---|---|---|
| Original | 0.01 | 0.18 |
| Fine-tuned | 0.32 | 0.41 |
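To make the two metrics concrete, here is a simplified, dependency-free sketch of SQuAD-style scoring on toy strings. It is an illustrative re-implementation, not the transformers code used above, and it may differ from it in edge cases such as empty answers. It shows how a longer answer can score zero on EM while still earning partial F1 credit.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase, drop punctuation and English articles, split on whitespace."""
    text = "".join(ch for ch in text.lower() if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()


def exact_match(gold: str, pred: str) -> int:
    """1 if the normalized answers are identical, else 0."""
    return int(normalize(gold) == normalize(pred))


def f1_score(gold: str, pred: str) -> float:
    """Harmonic mean of token-level precision and recall."""
    gold_tokens, pred_tokens = normalize(gold), normalize(pred)
    overlap = sum((Counter(gold_tokens) & Counter(pred_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)


print(exact_match("The barn", "the barn."))  # 1
print(exact_match("in the barn", "barn near house"))  # 0
print(round(f1_score("in the barn", "barn near house"), 2))  # 0.4
```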

Advanced topics

Continue a fine-tuning job

You can continue training from a previous fine-tuning job:

response = client.fine_tuning.create(

training_file="your-new-training-file-id",

from_checkpoint="previous-finetune-job-id",

wandb_api_key="your-wandb-api-key",

)

The from_checkpoint value can be any of the following:

- The output model name from the previous job

- Fine-tuning job ID

- A specific checkpoint step with the format ft-...:{STEP_NUM}
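The step-specific form is the job ID followed by a colon and the step number. A tiny sketch, using a placeholder job ID and a hypothetical helper name:

```python
from typing import Optional


def checkpoint_ref(job_id: str, step: Optional[int] = None) -> str:
    """Build a from_checkpoint value: the job ID alone, or job ID plus step."""
    if step is None:
        return job_id
    if step < 0:
        raise ValueError("step must be non-negative")
    return f"{job_id}:{step}"


# Placeholder job ID and an example step number
print(checkpoint_ref("ft-xxxx-yyyy"))  # ft-xxxx-yyyy
print(checkpoint_ref("ft-xxxx-yyyy", 48))  # ft-xxxx-yyyy:48
```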

To check all available checkpoints for a job:

tg fine-tuning list-checkpoints <FT_JOB_ID>

Training and validation split

To split your dataset into training and validation sets:

split_percent=90 # Percentage of lines to use for the training set
total_lines=$(wc -l < "your-datafile.jsonl")
split_lines=$((total_lines * split_percent / 100)) # Shell arithmetic is integer-only
head -n "$split_lines" "your-datafile.jsonl" > "your-datafile-train.jsonl"
tail -n +"$((split_lines + 1))" "your-datafile.jsonl" > "your-datafile-validation.jsonl"
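The shell commands split the file in its existing order. If the file is sorted in some way (by topic or source, for example), shuffling before splitting may give a more representative validation set. The Python sketch below does that with a fixed seed; the shuffle is an addition that the shell version does not perform, and the function name split_rows is hypothetical.

```python
import random


def split_rows(rows: list[str], train_ratio: float = 0.9, seed: int = 0):
    """Shuffle rows reproducibly, then split into (train, validation)."""
    if not 0 < train_ratio < 1:
        raise ValueError("train_ratio must be between 0 and 1")
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]


# Stand-in JSONL lines; in practice, read them from your data file
rows = [f'{{"messages": [], "id": {i}}}' for i in range(10)]
train_rows, validation_rows = split_rows(rows)
print(len(train_rows), len(validation_rows))  # 9 1
```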

Use a validation set during training

A validation set is a held-out dataset used to evaluate model performance during training on unseen data. Using a validation set helps monitor for overfitting and assists with hyperparameter tuning.

To use a validation set, provide validation_file and set n_evals to a number above 0:

response = client.fine_tuning.create(

training_file="your-training-file-id",

validation_file="your-validation-file-id",

n_evals=10, # Number of evaluations over the entire job

model="Qwen/Qwen3-8B",

)

Next steps