Overview

LoRA (Low-Rank Adaptation) enables efficient fine-tuning of large language models by training only a small set of additional parameters while keeping the original model weights frozen. This approach delivers several key advantages:

- Reduced training costs: Trains fewer parameters than full fine-tuning, using less GPU memory

- Faster deployment: Produces compact adapter files that can be quickly shared and deployed
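To make the cost reduction concrete, here is a rough sketch of the arithmetic. It assumes the standard LoRA formulation, in which a frozen weight matrix of shape d_out x d_in gains two small trainable factors of rank r. The dimensions and rank are illustrative values, not Together AI defaults.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix.

    LoRA learns A (rank x d_in) and B (d_out x rank); the weight update is B @ A.
    """
    return rank * (d_in + d_out)


def full_params(d_in: int, d_out: int) -> int:
    """Parameters updated when the whole matrix is fine-tuned."""
    return d_in * d_out


# Illustrative values only: a 4096 x 4096 projection adapted with rank 8.
d_in, d_out, rank = 4096, 4096, 8
lora = lora_trainable_params(d_in, d_out, rank)
full = full_params(d_in, d_out)
fraction = lora / full
print(f"LoRA: {lora:,} trainable vs {full:,} full ({fraction:.2%})")
```

The fraction for a whole model depends on which layers are adapted and on the chosen rank, so treat this as a per-matrix estimate.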

Quick start

This guide demonstrates how to fine-tune a model using LoRA. For a general introduction to fine-tuning on Together AI, see the fine-tuning overview.

Prerequisites

- Together AI API key

- Training data in the JSONL format
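Before uploading, it can help to confirm that the file really is JSONL, meaning one JSON object per line. The sketch below checks only that structural property. The `check_jsonl` helper and the `text` field in the sample records are hypothetical placeholders; the fields your records actually need depend on the chosen model and are defined in the Together AI data format documentation.

```python
import json
import os
import tempfile


def check_jsonl(path: str) -> int:
    """Return the number of records, raising ValueError on the first bad line."""
    count = 0
    with open(path, encoding="utf-8") as handle:
        for line_number, line in enumerate(handle, start=1):
            if not line.strip():
                continue  # this sketch tolerates blank lines
            try:
                record = json.loads(line)
            except json.JSONDecodeError as exc:
                raise ValueError(f"line {line_number}: invalid JSON ({exc.msg})") from exc
            if not isinstance(record, dict):
                raise ValueError(f"line {line_number}: expected a JSON object")
            count += 1
    return count


# Write a tiny sample file (placeholder field names) and validate it.
records = [{"text": "first example"}, {"text": "second example"}]
with tempfile.TemporaryDirectory() as tmp:
    sample_path = os.path.join(tmp, "train.jsonl")
    with open(sample_path, "w", encoding="utf-8") as handle:
        for record in records:
            handle.write(json.dumps(record) + "\n")
    record_count = check_jsonl(sample_path)
print(f"{record_count} valid records")
```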

Step 1: Upload Training Data

First, upload your training dataset to Together AI.

Step 2: Create Fine-tuning Job

Launch a LoRA fine-tuning job using the uploaded file ID.

Note: If you plan to use a validation set, make sure to set the --validation-file and --n-evals (the number of evaluations over the entire job) parameters. --n-evals must be set to a number above 0 for your validation set to be used.
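Steps 1 and 2 can be sketched in Python as follows. This is a minimal sketch, not the official method: it assumes a client object shaped like the Together Python SDK client, where `client.files.upload(file=...)` returns an object with an `id` and `client.fine_tuning.create(...)` accepts `training_file`, `model`, `lora`, `validation_file`, and `n_evals`. The `upload_and_launch` helper is hypothetical, and you should check the signatures against the SDK version you have installed.

```python
from typing import Any, Optional


def upload_and_launch(
    client: Any,
    training_path: str,
    model: str,
    validation_path: Optional[str] = None,
    n_evals: int = 0,
) -> Any:
    """Upload the dataset(s) and start a LoRA fine-tuning job.

    Assumes `client` behaves like the Together Python SDK client
    (see the assumptions listed in the text above).
    """
    if validation_path is not None and n_evals <= 0:
        raise ValueError("n_evals must be above 0 for the validation set to be used")

    # Step 1: upload the training data and keep the returned file ID.
    training_file = client.files.upload(file=training_path)
    job_args = {"training_file": training_file.id, "model": model, "lora": True}

    # Optional: upload a validation set and request evaluations.
    if validation_path is not None:
        validation_file = client.files.upload(file=validation_path)
        job_args["validation_file"] = validation_file.id
        job_args["n_evals"] = n_evals

    # Step 2: create the LoRA fine-tuning job from the uploaded file ID(s).
    return client.fine_tuning.create(**job_args)


# Possible usage, assuming the `together` package is installed and
# the TOGETHER_API_KEY environment variable is set:
#   from together import Together
#   job = upload_and_launch(Together(), "train.jsonl", "<base-model-name>")
```

The helper rejects a validation file combined with `n_evals` of 0 up front, mirroring the note above that the validation set is only used when the number of evaluations is above 0.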

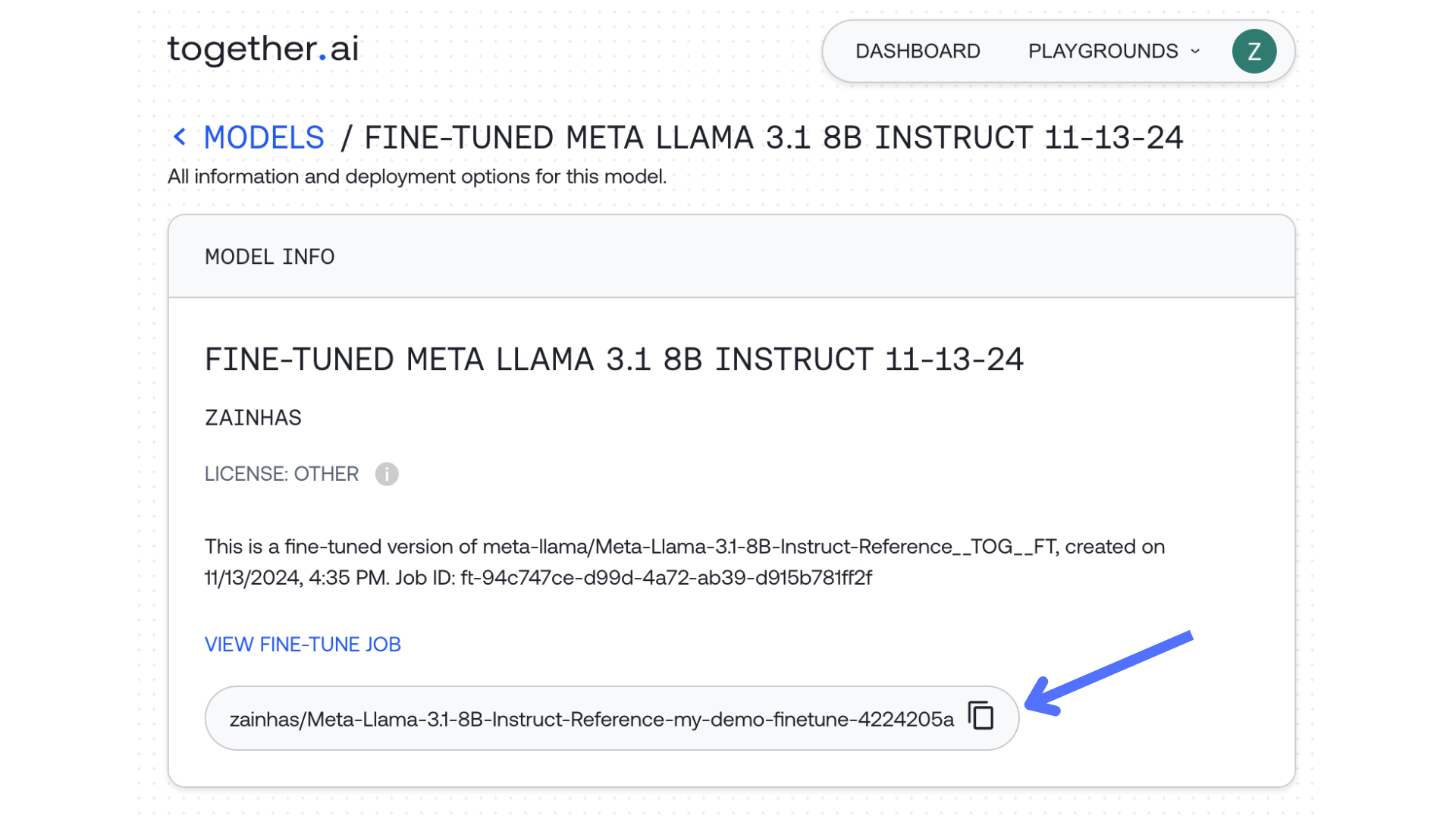

Step 3: Getting the output model

Once you submit the fine-tuning job, you should see the model output_name and job_id in the response.
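The job_id is what you would use to check on the job later, and the output_name identifies the resulting model. As a hedged sketch, the helper below polls until a job reaches a finished state. Everything here is an assumption for illustration: `wait_for_job` is a hypothetical helper, `fetch_job` is a caller-supplied function (for example, a wrapper around whatever job lookup call your SDK version provides), and the status strings are placeholders to replace with the values your client actually reports.

```python
import time
from typing import Any, Callable, Iterable


def wait_for_job(
    fetch_job: Callable[[], Any],
    terminal_statuses: Iterable[str] = ("completed", "error", "cancelled"),
    poll_seconds: float = 30.0,
    max_polls: int = 1000,
    sleep: Callable[[float], None] = time.sleep,
) -> Any:
    """Call fetch_job() repeatedly until its .status is a terminal one.

    The default status names are placeholders, not confirmed API values.
    """
    done = set(terminal_statuses)
    for _ in range(max_polls):
        job = fetch_job()
        if str(job.status) in done:
            return job
        sleep(poll_seconds)
    raise TimeoutError(f"job did not finish after {max_polls} polls")
```

Passing `sleep` as a parameter keeps the helper easy to test without real delays.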

Step 4: Deploy for inference

Once the fine-tuning job is completed, you can deploy your model for inference using a dedicated endpoint. See Deploying a Fine-tuned Model for detailed instructions.

Best Practices

- Data Preparation: Ensure your training data follows the correct JSONL format for your chosen model

- Validation Sets: Always include validation data to monitor training quality

- Model Naming: Use descriptive names for easy identification in production

- Monitoring: Track training metrics through the Together dashboard
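If you have a single dataset and no separate validation file, one simple way to follow the validation advice above is to hold out a random slice of the records. The sketch below is one possible approach, not a Together AI requirement: the `split_jsonl_lines` helper, the 10% default, and the `text` field in the demo records are all illustrative assumptions.

```python
import json
import random
from typing import List, Sequence, Tuple


def split_jsonl_lines(
    lines: Sequence[str],
    validation_fraction: float = 0.1,
    seed: int = 0,
) -> Tuple[List[str], List[str]]:
    """Shuffle JSONL lines and return (training_lines, validation_lines)."""
    if not 0 < validation_fraction < 1:
        raise ValueError("validation_fraction must be between 0 and 1")
    records = [line for line in lines if line.strip()]
    if len(records) < 2:
        raise ValueError("need at least two records to split")
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(records)
    n_val = max(1, round(len(records) * validation_fraction))
    n_val = min(n_val, len(records) - 1)  # always leave training data
    return records[n_val:], records[:n_val]


# Demo with placeholder records.
demo_lines = [json.dumps({"text": f"example {i}"}) for i in range(10)]
train_lines, validation_lines = split_jsonl_lines(demo_lines, validation_fraction=0.2)
print(len(train_lines), "training records,", len(validation_lines), "validation records")
```

Each resulting list can be written out one line per record to produce the training and validation files.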

Frequently Asked Questions

Which base models support LoRA fine-tuning?

Together AI supports LoRA fine-tuning on a curated selection of high-performance base models. See the supported models list for current options.

What’s the difference between LoRA and full fine-tuning?

LoRA trains only a small set of additional parameters (typically 0.1-1% of model size), resulting in faster training, lower costs, and smaller output files. Full fine-tuning updates all model parameters for maximum customization, at higher computational cost.

How do I run inference on my LoRA fine-tuned model?

Once training is complete, deploy your model using a dedicated endpoint. See Deploying a Fine-tuned Model for instructions.

Next Steps

- Explore advanced fine-tuning parameters for optimizing model performance

- Learn about uploading custom adapters trained outside Together AI

- Deploy your model with a dedicated endpoint