Data Preparation

Your dataset should contain examples with:

- An input field with messages in the conversational format.

- A preferred_output field with the ideal assistant response.

- A non_preferred_output field with a suboptimal assistant response.

The dataset should be a JSONL file, with each line holding one example that contains the three fields listed above.
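As a rough illustration of such a line, the sketch below builds one record and serializes it to JSONL. The field names come from the list above. The exact nesting (a "messages" list under input, and single-message lists for the two outputs), the sample texts, and the helper name to_jsonl are assumptions made for this example, not a confirmed schema.

```python
import json

# Hypothetical example record. The nesting of "messages" under "input" and the
# list-of-messages shape of the two outputs are assumptions for illustration.
example = {
    "input": {
        "messages": [
            {"role": "user", "content": "What is the capital of France?"},
        ]
    },
    "preferred_output": [
        {"role": "assistant", "content": "The capital of France is Paris."}
    ],
    "non_preferred_output": [
        {"role": "assistant", "content": "France is a country in Europe."}
    ],
}


def to_jsonl(records):
    """Serialize records to JSONL text: one compact JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records) + "\n"


jsonl_text = to_jsonl([example])
```

Writing `jsonl_text` to a file gives one example per line. Because `json.dumps` emits no raw newlines by default, each record stays on a single line.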

Preference-tuning does not support pretokenized datasets. Contact us if you need to use them for preference training.

Launching preference fine-tuning

Hyperparameters

- Set --training-method="dpo".

- The --dpo-beta parameter controls how much the model is allowed to deviate from its reference (or pre-tuned) model during fine-tuning. The default value is 0.1, but you can experiment with values between 0.05 and 0.9.

  - A lower value of beta (e.g., 0.1) allows the model to update more aggressively toward preferred responses.

  - A higher value of beta (e.g., 0.7) keeps the updated model closer to the reference behavior.

- The --dpo-normalize-logratios-by-length parameter (optional, default is False) enables normalization of log ratios by sample length during the DPO loss calculation.

- The --rpo-alpha coefficient (optional, default is 0.0) incorporates the NLL loss on selected samples with the corresponding weight.

- The --simpo-gamma coefficient (optional, default is 0.0) adds a margin to the loss calculation, force-enables log ratio normalization (--dpo-normalize-logratios-by-length), and excludes reference logits from the loss computation. The resulting loss function is equivalent to the one used in the SimPO paper.
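To make the roles of these hyperparameters concrete, here is a minimal sketch of a per-pair preference loss in plain Python. It is not the platform's implementation. It follows the standard DPO and SimPO formulations, and it assumes that a positive simpo_gamma switches on the SimPO behavior and that the NLL term is averaged per token. The function and argument names are hypothetical.

```python
import math


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def preference_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected,
                    chosen_len, rejected_len, beta=0.1,
                    normalize_by_length=False, rpo_alpha=0.0, simpo_gamma=0.0):
    """Loss for one preference pair.

    policy_* and ref_* are summed token log-probabilities of a response under
    the trained model and the reference model; *_len are response lengths in
    tokens.
    """
    if simpo_gamma > 0.0:
        # SimPO (assumed to trigger on gamma > 0): drop the reference model
        # and force length normalization on.
        chosen, rejected = policy_chosen, policy_rejected
        normalize_by_length = True
    else:
        # DPO: log ratios of the trained model against the reference model.
        chosen = policy_chosen - ref_chosen
        rejected = policy_rejected - ref_rejected

    if normalize_by_length:
        chosen /= chosen_len
        rejected /= rejected_len

    # beta scales the margin between the two responses; gamma shifts it.
    loss = -log_sigmoid(beta * (chosen - rejected) - simpo_gamma)

    if rpo_alpha > 0.0:
        # Weighted NLL on the preferred response (per-token average: assumption).
        loss += rpo_alpha * (-policy_chosen / chosen_len)
    return loss
```

When the trained model equals the reference model, both log ratios are zero and the DPO loss is log 2 for any beta. The loss on a given pair changes more steeply with the log-ratio margin as beta grows.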

Note

- For LoRA Long-context fine-tuning we currently use half of the context length for the preferred response and half for the non-preferred response. So, if you are using a 32K model, the effective context length will be 16K.

- Preference fine-tuning calculates loss based on the preferred and non-preferred outputs. Therefore, the --train-on-inputs flag is ignored with preference fine-tuning.

Metrics

In addition to standard metrics like losses, for DPO we report:- Accuracies — percentage of times the reward for the preferred response is greater than the reward for the non-preferred response.

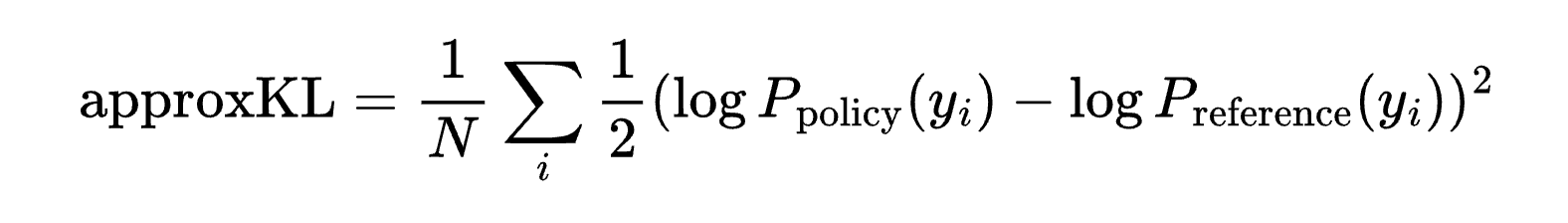

- KL Divergence — similarity of output distributions between the trained model and the reference model, calculated as:
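As a rough illustration of the accuracy metric, the sketch below counts how often the preferred response receives the higher reward. The text does not define the reward, so this example assumes the usual DPO implicit reward: beta times the log-probability ratio between the trained model and the reference model. The function names and input layout are hypothetical.

```python
def implicit_reward(policy_logp, ref_logp, beta=0.1):
    """DPO-style implicit reward (assumed definition): beta * log ratio."""
    return beta * (policy_logp - ref_logp)


def reward_accuracy(pairs, beta=0.1):
    """Fraction of pairs where the preferred response gets the higher reward.

    Each pair is (policy_chosen, ref_chosen, policy_rejected, ref_rejected),
    all summed log-probabilities of the full response.
    """
    pairs = list(pairs)
    wins = sum(
        implicit_reward(pc, rc, beta) > implicit_reward(pr, rr, beta)
        for pc, rc, pr, rr in pairs
    )
    return wins / len(pairs)


# Two toy pairs: the first favors the preferred response, the second does not.
toy_pairs = [(-5.0, -6.0, -9.0, -8.0), (-7.0, -6.0, -5.0, -6.0)]
toy_accuracy = reward_accuracy(toy_pairs)
```

For a positive beta, the comparison depends only on the sign of the log-ratio difference, so this accuracy does not change with the value of beta.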

Combining methods: supervised fine-tuning & preference fine-tuning

Supervised fine-tuning (SFT) is the default method on our platform. The recommended approach is to run SFT first and then follow up with preference tuning:

- First, perform supervised fine-tuning (SFT) on your data.

- Then, refine the model with preference fine-tuning, using continued fine-tuning on your SFT checkpoint.