import json
from datetime import datetime

from together import Together

tools = [
    {
        "type": "function",
        "function": {
            "name": "get_date",
            "description": "Get the current date",
            "parameters": {"type": "object", "properties": {}},
        },
    },
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get weather of a location, the user should supply the location and date.",
            "parameters": {
                "type": "object",
                "properties": {
                    "location": {
                        "type": "string",
                        "description": "The city name",
                    },
                    "date": {
                        "type": "string",
                        "description": "The date in format YYYY-mm-dd",
                    },
                },
                "required": ["location", "date"],
            },
        },
    },
]

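def get_date_mock():
    # Return today's date in the YYYY-mm-dd format that get_weather expects.
    return datetime.now().strftime("%Y-%m-%d")


def get_weather_mock(location, date):
    # Mock implementation: ignores its arguments and returns a fixed forecast.
    return "Sunny 68~82°F"


# Maps each tool name declared in `tools` to the local function that implements it.
TOOL_CALL_MAP = {
    "get_date": get_date_mock,
    "get_weather": get_weather_mock,
}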

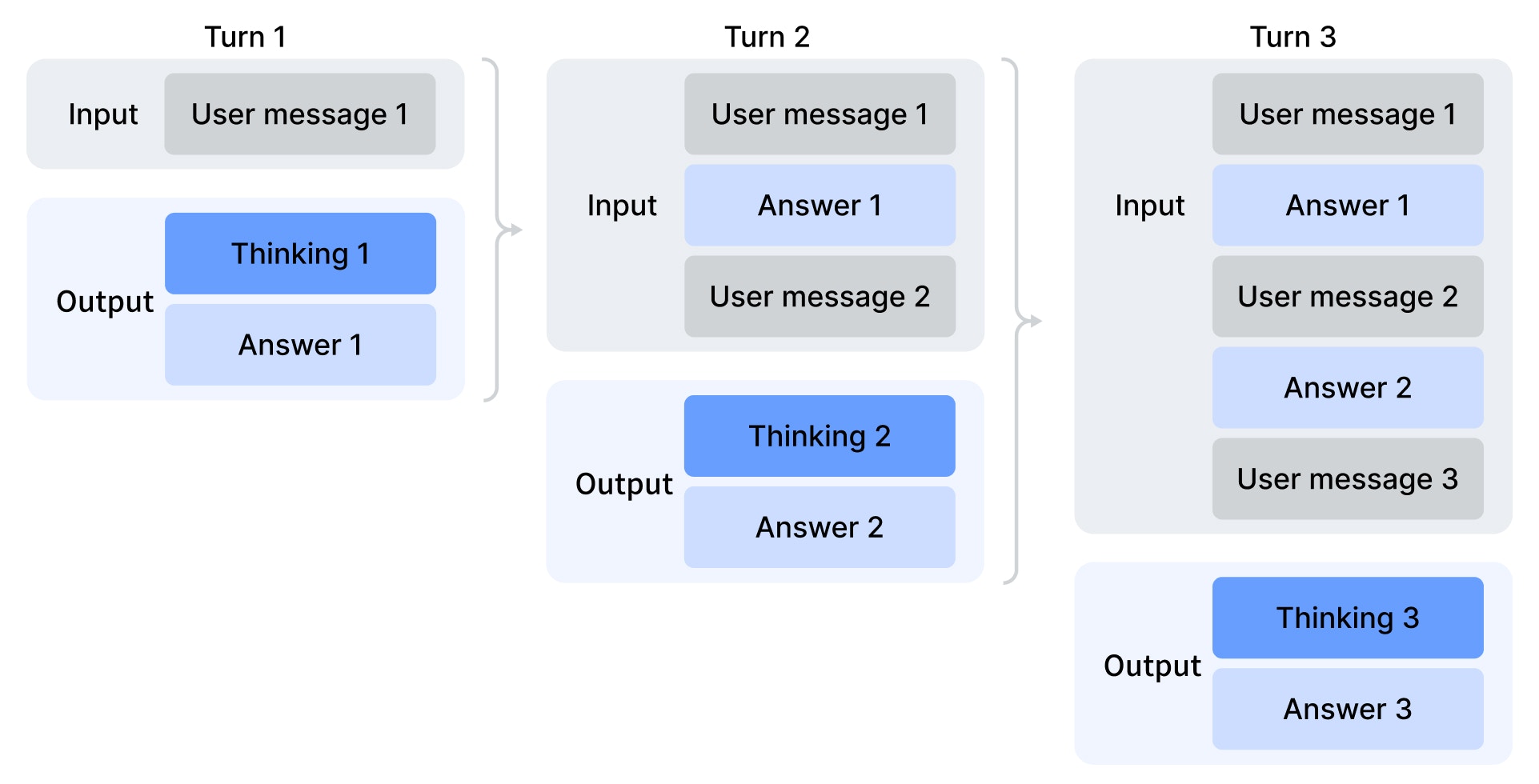

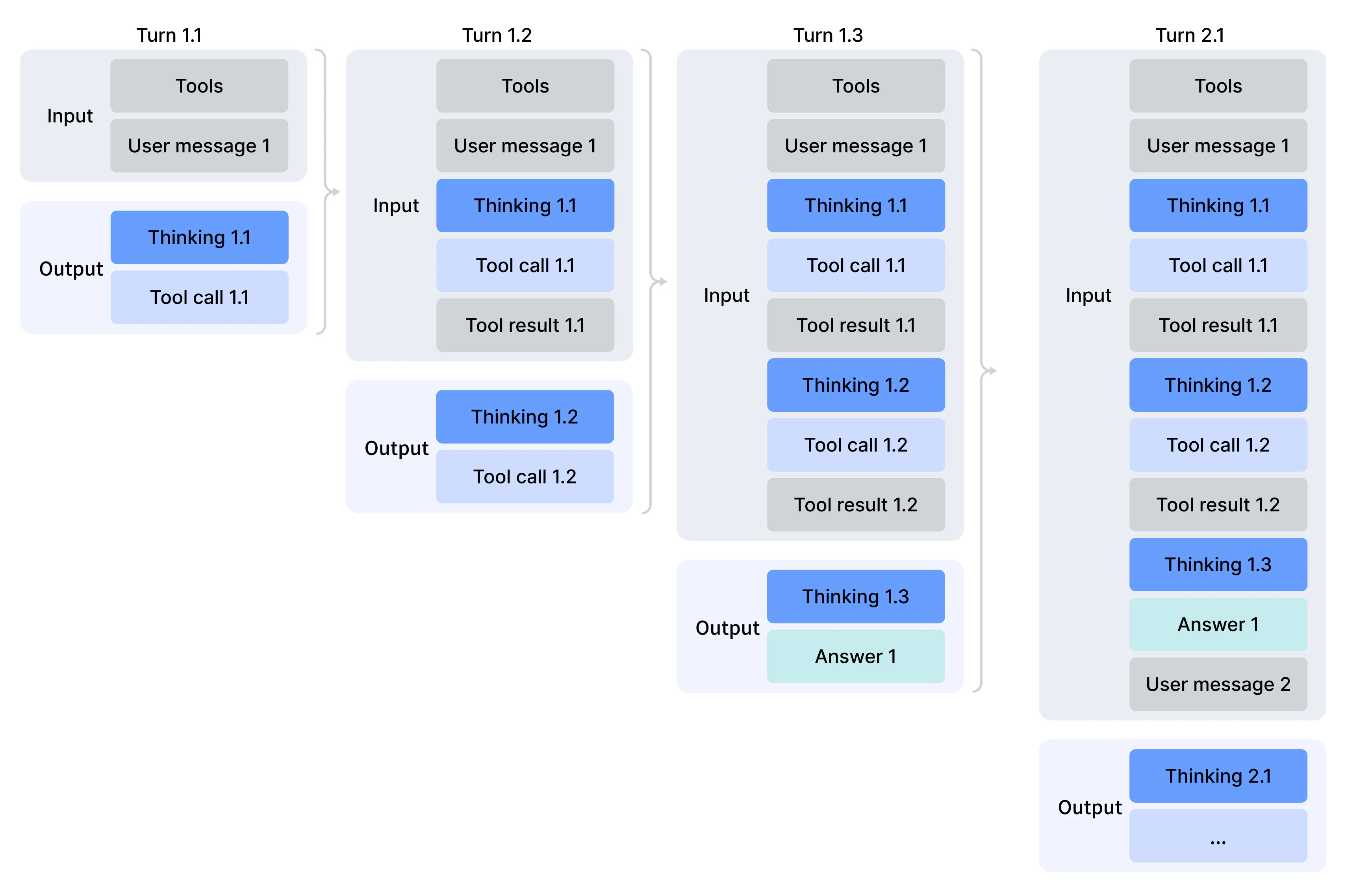

def run_turn(client, turn, messages):
    # Handle one user turn: keep calling the model until it replies without tool calls.
    sub_turn = 1
    while True:
        response = client.chat.completions.create(
            model="deepseek-ai/DeepSeek-V4-Pro",
            messages=messages,
            tools=tools,
            reasoning_effort="high",
        )
        choice = response.choices[0].message
        # The reasoning text may be exposed under either attribute name.
        reasoning = getattr(choice, "reasoning", None) or getattr(
            choice, "reasoning_content", None
        )
        content = choice.content
        tool_calls = choice.tool_calls

        print(f"Turn {turn}.{sub_turn}")
        print(f" reasoning = {reasoning}")
        print(f" content = {content}")
        print(f" tool_calls= {tool_calls}")

        # Rebuild the assistant message as a plain dict so it can be sent back,
        # including the reasoning and any tool calls the model made.
        assistant_msg = {
            "role": "assistant",
            "content": content or "",
        }
        if reasoning:
            assistant_msg["reasoning_content"] = reasoning
        if tool_calls:
            assistant_msg["tool_calls"] = [
                {
                    "id": tc.id,
                    "type": "function",
                    "function": {
                        "name": tc.function.name,
                        "arguments": tc.function.arguments,
                    },
                }
                for tc in tool_calls
            ]
        messages.append(assistant_msg)

        # No tool calls means the model has produced its final answer for this turn.
        if not tool_calls:
            break

        # Run each requested tool and append its result as a "tool" message.
        for tool in tool_calls:
            tool_function = TOOL_CALL_MAP[tool.function.name]
            # A tool without parameters may arrive with an empty argument string.
            tool_result = tool_function(**json.loads(tool.function.arguments or "{}"))
            print(f" tool result for {tool.function.name}: {tool_result}")
            messages.append(
                {
                    "role": "tool",
                    "tool_call_id": tool.id,
                    "content": tool_result,
                }
            )
        sub_turn += 1
    print()

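# Together() picks up the API key from the TOGETHER_API_KEY environment variable.
client = Together()

# Turn 1: the first user question.
messages = [
    {"role": "user", "content": "How's the weather in San Francisco tomorrow?"}
]
run_turn(client, 1, messages)

# Turn 2: a follow-up question appended to the same conversation history.
messages.append(
    {"role": "user", "content": "How's the weather in New York tomorrow?"}
)
run_turn(client, 2, messages)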